Every dollar you spend on a Meta or TikTok ad that doesn't convert is a dollar that funded your competitor's growth. Most performance teams know this, but they still bleed budget on underperforming creatives for weeks before the data forces a change. The fix isn't a bigger budget or a smarter bidding strategy. It's a systematic process for identifying what works, shipping more of it, and cutting what doesn't before the damage compounds. This guide walks you through that process, step by step.

Table of Contents

- Assess your current ad performance and creative assets

- Select and prioritize creative testing methods

- Implement high-impact creative changes based on data

- Track, analyze, and repeat for sustained performance lifts

- Why "set-and-forget" ad creative is dead: our hard-won lesson

- Boost your ad performance with CreaBoost tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Audit your creatives | Benchmark and gather all your ad assets before testing, so you know where to focus first. |
| Prioritize smart testing | Use the right creative testing framework for your resource level to quickly identify winners. |
| Improve and iterate fast | Act on test results quickly and systematize creative changes for the best impact. |
| Measure and repeat | Set up a feedback loop to consistently analyze, update, and improve ad performance. |

Assess your current ad performance and creative assets

With your challenges and goals in mind, the next step is to assess your starting point. You can't fix what you haven't measured, and most teams are working from incomplete pictures of their creative performance.

Start by pulling the metrics that actually tell the story. Click-through rate (CTR) tells you if your creative is stopping the scroll. Cost per acquisition (CPA) tells you if the people who click are actually converting. Return on ad spend (ROAS) tells you whether the whole equation is profitable. Frequency tells you how many times, on average, each person reached has seen the ad, which makes it one of the earliest signals of creative fatigue. Hook rate, which measures the percentage of people who watch past the first three seconds of a video ad, tells you whether your opening is doing its job.

Here's a quick reference for the metrics worth tracking at the creative level:

| Metric | What it measures | Warning threshold |
|---|---|---|
| CTR | Scroll-stopping power | Below 1% on Meta |
| CPA | Cost to acquire a customer | Above your target by 20%+ |
| ROAS | Revenue per dollar spent | Below breakeven ROAS |
| Frequency | Audience saturation | Above 3.0 in 7 days |
| Hook rate | First 3-second retention | Below 25% |
| Thumb-stop ratio | Initial scroll interruption | Below 20% |
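
Most of these metrics reduce to simple ratios over raw delivery totals. The sketch below is a minimal illustration, assuming you have exported 7-day totals per creative. The field names (`impressions`, `reach`, `three_sec_views`, and so on) are hypothetical rather than any platform's export schema, and hook rate is computed as three-second views divided by impressions, which is one common convention and may differ from how your reporting tool defines it.

```python
from dataclasses import dataclass


@dataclass
class CreativeStats:
    """Raw 7-day totals for one creative (hypothetical field names)."""
    impressions: int
    reach: int
    clicks: int
    three_sec_views: int
    conversions: int
    spend: float
    revenue: float


def ratio(numerator: float, denominator: float) -> float:
    """Divide, returning 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def creative_metrics(s: CreativeStats) -> dict[str, float]:
    return {
        "ctr": ratio(s.clicks, s.impressions),
        # With no conversions CPA is undefined; treat it as infinitely expensive.
        "cpa": s.spend / s.conversions if s.conversions else float("inf"),
        "roas": ratio(s.revenue, s.spend),
        "frequency": ratio(s.impressions, s.reach),
        # Assumption: hook rate = 3-second views / impressions.
        "hook_rate": ratio(s.three_sec_views, s.impressions),
    }


def warning_flags(m: dict[str, float], target_cpa: float,
                  breakeven_roas: float) -> list[str]:
    """Return the names of metrics that cross the warning thresholds."""
    checks = {
        "ctr": m["ctr"] < 0.01,
        "cpa": m["cpa"] >= target_cpa * 1.20,
        "roas": m["roas"] < breakeven_roas,
        "frequency": m["frequency"] > 3.0,
        "hook_rate": m["hook_rate"] < 0.25,
    }
    return [name for name, tripped in checks.items() if tripped]
```

The target CPA and breakeven ROAS are passed in because they depend on your margins. Thumb-stop ratio is left out because its definition varies between tools.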

Once you have the numbers, sort your creative assets into three buckets: top performers (above your ROAS target), middle performers (close but inconsistent), and clear underperformers (burning budget with no signal of recovery). This categorization sounds simple, but most teams skip it and end up optimizing based on gut feel or whoever is loudest in the Slack channel.
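
As a rough sketch, the three-bucket sort can be expressed as a small rule on ROAS. The `near_band` cutoff of 80% of target is an assumed stand-in for "close", not a figure from this guide, and ROAS alone does not capture how consistent a creative has been, so treat the output as a first pass to review by hand.

```python
from collections import defaultdict


def bucket_for(roas: float, target_roas: float, near_band: float = 0.8) -> str:
    """Classify one creative by ROAS. near_band is an assumed cutoff."""
    if roas >= target_roas:
        return "top"
    if roas >= target_roas * near_band:
        return "middle"
    return "under"


def sort_into_buckets(roas_by_creative: dict[str, float],
                      target_roas: float) -> dict[str, list[str]]:
    """Group creative names under 'top', 'middle', or 'under'."""
    buckets: dict[str, list[str]] = defaultdict(list)
    for name, roas in roas_by_creative.items():
        buckets[bucket_for(roas, target_roas)].append(name)
    return dict(buckets)
```

For example, with a target ROAS of 3.0, a creative at 3.5 lands in "top", one at 2.6 in "middle", and one at 1.0 in "under".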

Key things to pull together during this audit:

- All active ad creatives with their performance data for the last 30 days

- Any paused ads that previously performed well (these are your templates)

- Notes on which concepts, offers, or hooks were behind each creative

- Any tagging or naming conventions already in place (or the absence of them)

Ad creative analytics tools can surface this data automatically, connecting directly to your ad accounts and tagging assets by format, hook type, and concept so you're not doing this in a spreadsheet. Performance analytics tools can highlight high and low-performing assets in minutes rather than hours.

Pro Tip: Run this audit at the concept level, not just the individual ad level. Two ads with different visuals but the same underlying offer angle should be grouped together. If the concept is weak, swapping the visual won't save it.
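
A concept-level roll-up might look like the sketch below. It assumes each ad already carries a concept tag (the `concept` field and the sample labels are hypothetical) and pools spend and revenue before dividing, so a large-spend ad counts for more than a small one.

```python
from collections import defaultdict
from typing import NamedTuple


class AdRow(NamedTuple):
    ad_name: str
    concept: str   # hypothetical tag, e.g. the offer angle behind the ad
    spend: float
    revenue: float


def roas_by_concept(rows: list[AdRow]) -> dict[str, float]:
    """Pooled ROAS per concept: total revenue divided by total spend."""
    spend: dict[str, float] = defaultdict(float)
    revenue: dict[str, float] = defaultdict(float)
    for row in rows:
        spend[row.concept] += row.spend
        revenue[row.concept] += row.revenue
    # Skip concepts with no spend to avoid dividing by zero.
    return {c: revenue[c] / spend[c] for c in spend if spend[c] > 0}
```

Averaging each ad's ROAS instead would let a tiny test ad with a lucky result distort the picture for the whole concept.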

Select and prioritize creative testing methods

Now that you've reviewed your existing assets, it's time to decide how you'll approach creative experimentation. Not every testing method fits every team or every budget, and choosing the wrong one wastes time you don't have.

Split testing (also called A/B testing) is the simplest approach. You run two versions of an ad against each other, changing one variable at a time. It's slow but clean. The insight is unambiguous because only one thing changed. This works well when you have a clear hypothesis and enough budget to get statistical significance on each variant.
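
One common way to check significance for a two-variant test is a two-proportion z-test on conversion rates. The sketch below uses only the standard library. It assumes each click (or impression) is an independent trial and that samples are large enough for the normal approximation to hold, and it is not a description of how any ad platform scores its own tests.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (rate_a - rate_b) / std_err
    # For a standard normal Z, P(|Z| > |z|) equals erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


# Variant A: 50 conversions from 1,000 clicks; variant B: 80 from 1,000.
p = two_proportion_p_value(50, 1000, 80, 1000)
print(f"p-value: {p:.4f}")
```

A p-value below a cutoff you chose in advance (0.05 is a common convention) suggests the gap is unlikely to be noise. Checking results repeatedly and stopping the moment the number dips below the cutoff inflates false positives, which is one reason to fix the review date up front.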

Multivariate testing lets you test multiple variables simultaneously. You might test three hooks, two visual styles, and two calls-to-action at once. The combinations multiply fast, which means you need more budget and more traffic to get reliable results. The upside is that you learn more in a single cycle. The downside is that isolating what actually caused a lift gets complicated.
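
To see how quickly the combinations multiply, the example above (three hooks, two visual styles, two CTAs) can be enumerated directly. The labels are placeholders, and the budget helper simply multiplies variant count by a per-variant minimum spend that you choose.

```python
from itertools import product

hooks = ["question", "bold_claim", "testimonial"]  # placeholder labels
visuals = ["ugc", "polished"]
ctas = ["Shop now", "Learn more"]

# Every hook paired with every visual style and every CTA.
variants = list(product(hooks, visuals, ctas))
print(len(variants))  # 12, i.e. 3 * 2 * 2


def min_test_budget(variant_count: int, spend_per_variant: float) -> float:
    """Lowest total spend that gives every variant its minimum budget."""
    return variant_count * spend_per_variant


print(min_test_budget(len(variants), 50.0))   # 600.0
print(min_test_budget(len(variants), 100.0))  # 1200.0
```

Adding a third CTA would raise the count to 18 variants, which is why a full multivariate test needs far more budget and traffic than a single A/B split.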

Dynamic Creative Optimization (DCO), which Meta offers natively through its dynamic creative feature, automatically combines your creative components (images, headlines, descriptions, CTAs) and serves the best-performing combinations. It's fast and low-effort, but the platform controls the learning, which means you don't always get clean data you can act on for the next cycle.

| Testing method | Speed | Cost | Insight depth | Best for |
|---|---|---|---|---|
| Split (A/B) test | Slow | Low | High | Single variable hypotheses |
| Multivariate | Medium | Medium | Medium | Multiple variables at once |
| DCO | Fast | Low | Low | Scaling known concepts |

Systematic creative testing tends to surface top-performing assets faster than intuition alone. The teams that win aren't necessarily running more tests. They're running better-structured tests with clearer hypotheses going in.

To choose the right method, ask yourself three questions. First, how much budget can you allocate to testing without cannibalizing your proven campaigns? Second, how quickly do you need results? Third, how important is it that you understand why something worked, not just that it worked?

Steps to set up a structured testing cycle:

- Define a single learning objective for the cycle (e.g., "Which hook format drives the lowest CPA?")

- Select the testing method that fits your budget and timeline

- Set a minimum spend threshold before you evaluate results (usually $50-$100 per variant)

- Document your hypothesis before launching so you can compare it to what actually happened

- Schedule a fixed review date so tests don't run indefinitely
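
The steps above can be captured in a small record so that the hypothesis, spend threshold, and review date are written down before launch. This is only a sketch. The class, field, and status names are hypothetical, and the rule that a test past its review date gets reviewed even when underfunded is one reasonable reading of "tests don't run indefinitely".

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CreativeTestPlan:
    objective: str               # the single learning objective for the cycle
    hypothesis: str              # written before launch, compared afterwards
    method: str                  # e.g. "ab", "multivariate", "dco"
    min_spend_per_variant: float
    review_date: date


def review_status(plan: CreativeTestPlan,
                  spend_by_variant: dict[str, float],
                  today: date) -> str:
    """Decide whether a running test is ready to be evaluated."""
    funded = all(spend >= plan.min_spend_per_variant
                 for spend in spend_by_variant.values())
    if funded:
        return "evaluate"
    if today >= plan.review_date:
        # The fixed review date has arrived; review anyway, but read the
        # results cautiously because some variants are short on spend.
        return "review_underfunded"
    return "keep_running"
```

Freezing the dataclass keeps the hypothesis and threshold from being quietly edited after results start coming in.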

AI-powered creative generation makes it faster to produce the variants you need for structured tests, so you're not waiting on a design queue every time you want to run a new hypothesis.

Pro Tip: During your busiest sales periods (think Q4 or major promotions), default to simple A/B tests on a single variable. Multivariate tests during high-traffic periods produce noisy data because audience behavior shifts with the season.

Implement high-impact creative changes based on data

Once you've selected a testing method, it's crucial to act quickly on what the data tells you. Insight without action is just an expensive observation.

Focus your creative changes on the elements that move the needle most. In order of typical impact:

- The hook: The first 3 seconds of a video or the headline of a static ad. This is where most creative performance is won or lost. A weak hook means nobody sees the rest.

- The offer: What you're promising the viewer. "Free shipping" and "30% off" can perform dramatically differently on the same product to the same audience.

- The visual style: UGC (user-generated content) versus polished brand creative versus meme-style formats. Each resonates differently depending on the platform, the product, and the moment.

- The call-to-action (CTA): "Shop now" versus "Learn more" versus "Claim your discount" can shift conversion rates meaningfully, especially on lower-funnel campaigns.

The mistake most teams make is treating creative updates as one-off tasks rather than a system. When a test surfaces a winner, the next step isn't just to scale that ad. It's to extract the principle behind why it won and apply it to the next round of briefs.

Quick implementation of creative insights leads to measurable increases in ad performance. The teams that move from insight to live ad in 24 to 48 hours consistently outperform teams that take a week to get through approvals and revisions.

To speed up creative iteration, build a lightweight approval process with no more than two review steps. Creative directors review for brand safety. Media buyers review for platform compliance. That's it. Everything else is a delay.

Pro Tip: When a creative concept wins, immediately build a template from it. Lock the structure (format, pacing, visual zones) and swap only the offer or hook in the next round. This is how you scale a winning formula without rebuilding from scratch every cycle.

Track, analyze, and repeat for sustained performance lifts

Updating your creative is only effective if you can measure and repeat your success. A single good test cycle doesn't build a competitive advantage. A repeatable system does.

Here's a step-by-step process for establishing an ongoing analytics loop:

- Set a weekly review cadence. Every Monday, pull performance data from the previous week. Flag any creative where CPA has risen more than 15% week-over-week or where frequency has crossed 3.0.

- Categorize results by concept, not just by ad ID. Group ads by the underlying angle or hook type so you can see patterns across campaigns, not just individual winners.

- Feed insights directly into the next brief. The question isn't "which ad won?" It's "what does this tell us about what our audience responds to right now?"

- Archive, don't delete. Creatives that underperformed in one context sometimes work in a different placement, audience, or season. Keep a searchable record.

- Set fatigue alerts. Don't wait for CPA to spike before you notice a creative is burning out. Frequency and hook rate degradation typically show up 7 to 14 days before the headline metrics move.
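
The weekly flagging rule from the first step can be sketched as follows, using the 15% week-over-week CPA rise and the 3.0 frequency ceiling mentioned above as defaults. The row structure and field names are hypothetical, and rows are keyed by concept to match the grouping advice in the second step.

```python
from dataclasses import dataclass


@dataclass
class WeeklyRow:
    concept: str
    cpa_last_week: float
    cpa_this_week: float
    frequency: float


def fatigue_flags(rows: list[WeeklyRow],
                  cpa_rise_limit: float = 0.15,
                  frequency_limit: float = 3.0) -> dict[str, list[str]]:
    """Map each flagged concept to the reasons it was flagged."""
    flagged: dict[str, list[str]] = {}
    for row in rows:
        reasons = []
        # Skip the CPA check when there is no prior-week baseline.
        if row.cpa_last_week > 0:
            change = (row.cpa_this_week - row.cpa_last_week) / row.cpa_last_week
            if change > cpa_rise_limit:
                reasons.append("cpa_rise")
        if row.frequency > frequency_limit:
            reasons.append("frequency")
        if reasons:
            flagged[row.concept] = reasons
    return flagged
```

A concept flagged on frequency alone is worth watching even if CPA still looks healthy, since saturation tends to show up before cost does.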

"Your winning creative today is your fatigued creative next month. The brands that treat performance as a snapshot rather than a moving target are the ones that get surprised by rising CPAs every quarter. The brands that build measurement into their weekly rhythm are the ones that stay ahead of it."

Ongoing performance measurement ensures optimization efforts don't stall after the first round. The compounding effect of a tight feedback loop is significant. Teams that set up ongoing analysis and act on it weekly can cut wasted spend by identifying fatigue before it fully sets in, often saving a week or more of budget on burned-out assets.

What to do when your best ads stop working: don't try to resuscitate them. Extract the winning elements (the hook structure, the offer framing, the visual style), rebuild with fresh execution, and test the new version against a different concept entirely. Chasing a dying creative is one of the most common ways teams lose ground during competitive periods.

Why "set-and-forget" ad creative is dead: our hard-won lesson

Here's the uncomfortable reality most articles won't say directly: the biggest threat to your ad performance isn't your competitors' budgets. It's your own complacency about creative refresh.

We've seen it consistently. A team finds a winning creative, scales it hard, and then assumes the work is done. Six weeks later, CPAs are up 30% and the first instinct is to blame the algorithm, the audience, or the season. The actual culprit is almost always the same: the creative got stale, and nobody built a system to catch it.

The conventional wisdom is to "test and learn." The reality is that most teams test once, find something that works, and then stop testing until the pain forces them back. That's not a learning loop. That's a reactive cycle.

The brands that consistently outperform their benchmarks aren't running more ads. They're running a tighter creative refresh cycle. They treat every winning ad as a temporary asset with a shelf life, not a permanent solution. They build rapid creative refresh into their weekly workflow, not as a crisis response but as standard operating procedure.

The other thing worth saying plainly: intuition is not a substitute for data at scale. Your media buyer's gut feeling about which hook will win is worth testing. It is not worth betting your weekly budget on without validation. The teams that build systematic, data-driven creative processes consistently outperform the ones that rely on experience and instinct alone, not because experience doesn't matter, but because the data catches what experience misses.

Pro Tip: Treat your creative pipeline the same way you treat your media budget. Set a fixed percentage of your production capacity for net-new concepts each week, even when your current ads are performing well. The best time to develop your next winner is before you need it.

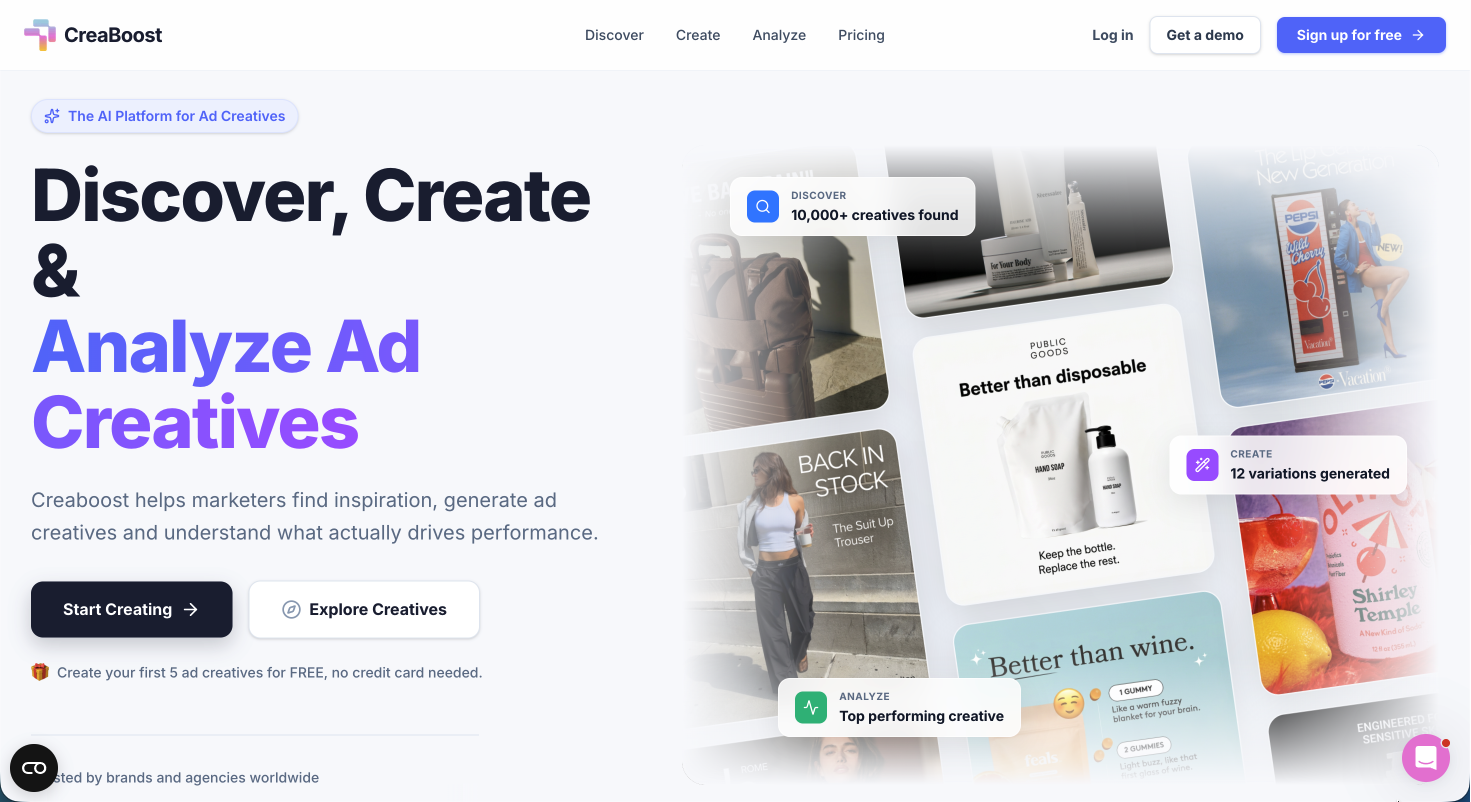

Boost your ad performance with CreaBoost tools

You're now ready to implement the entire process, and CreaBoost can help make every phase easier.

If the framework in this article sounds right but the execution feels like it would take three tools and a half-day of manual work, that's exactly the problem CreaBoost is built to solve. You can analyze creative performance directly from your ad accounts with auto-tagging by hook, format, and concept, so the weekly review cadence described above takes minutes instead of hours.

When it's time to ship new variants, AI creative generation turns a product URL into platform-ready static ads across every Meta and TikTok format you need, batch-resized and on-brand. No designer round-trips. No queue delays. Just faster iteration on the concepts your data says are worth testing. Check CreaBoost pricing to find the plan that fits your team's scale and cadence.

Frequently asked questions

What is the fastest way to identify my top-performing ad creatives?

Use a creative analytics tool that connects directly to your ad accounts and automatically surfaces your highest-performing assets by ROAS, CPA, and hook type, without manual tagging or spreadsheet work.

How often should I update ad creatives on Meta and TikTok?

Update creatives every 2 to 4 weeks, or sooner if frequency climbs above 3.0 or CPA rises more than 15% week-over-week. Reviewing performance weekly helps you catch fatigue before it shows up in your headline numbers.

What should I test first when trying to boost ad performance?

Start by testing new hooks or opening visuals, since these typically drive the largest lift in engagement and CTR. The hook is where most creative performance is won or lost, so acting quickly on hook test results usually pays off first.

Can AI help with creating better ad creatives?

Yes. AI-powered creative tools accelerate the production and iteration of new ad concepts, letting your team test more hypotheses per cycle without adding headcount or extending timelines.